We’ve got you covered

Is your business IT stressing you out? You didn’t get into business to deal with IT problems such as cybersecurity and hosting a website, but somebody’s gotta do it. With FUNCSHUN as your IT partner, every aspect of your technology will be monitored and maintained.

Here’s what we can do for you

Virtual Private Server & Website Hosting

Gain more privacy and more control over your website with our hosting services

VoIP

Enjoy the benefits of a modern communications solution that is tailored to suit your company’s needs

Our team has over 10 years of combined experience working in the Miami area, but don’t just take our word for it.

Making your IT problems a thing of the past will be quick and painless with FUNCSHUN

Our fast and hassle-free services are ideal for small and medium businesses in Miami

Consult

Book a FREE consultation and we’ll learn about your business goals to identify your IT needs

Plan

We’ll present a strategic plan to implement the solution to take your business where it needs to go

Execute

When we come to an agreement, our engineers will execute the plan so you can enjoy the benefits of smooth IT immediately

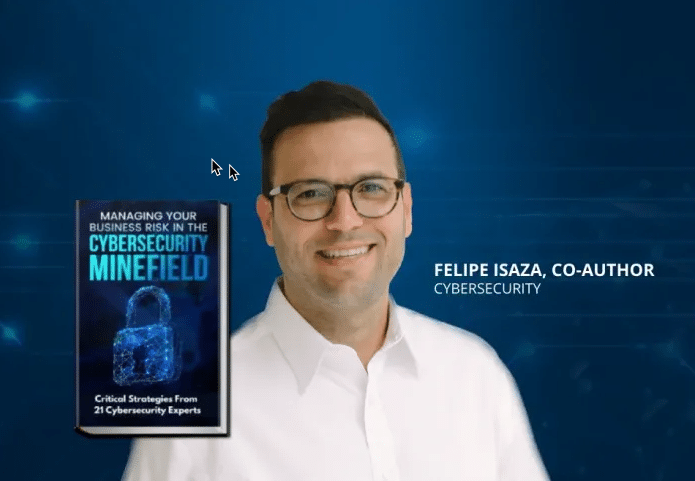

Check out our FREE Managed Services eBook to learn more!

If you’re not 100% clear on what a Managed Services Provider (MSP) does, or you’d like more details before making a decision, that’s understandable. Our eBook is FREE and comes with no obligation.

Industry partners

Where our customers are located